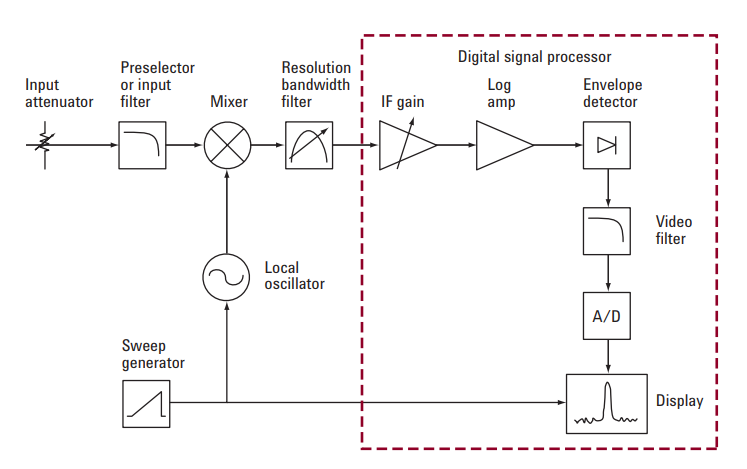

The spectrum analyzer, like the oscilloscope, is a basic tool for observing signals. Where the oscilloscope provides a window into the time domain, the spectrum analyzer provides a window into the frequency domain. Getting better spectrum analyzer measurements requires three things: the input signal must be undistorted, the analyzer settings must be chosen to suit the specific measurement, and the measurement procedure must be optimized to take best advantage of the instrument's specifications. The Keysight hints guide covers these steps in more detail:

http://literature.cdn.keysight.com/litweb/pdf/5988-5677EN.pdf?id=1000000437:epsg:apn

Hint 1. Selecting the Best Resolution Bandwidth (RBW)

Hint 2. Improving Measurement Accuracy

Hint 3. Optimize Sensitivity When Measuring Low-level Signals

Hint 4. Optimize Dynamic Range When Measuring Distortion

Hint 5. Identifying Internal Distortion Products

Hint 6. Optimize Measurement Speed When Measuring Transients

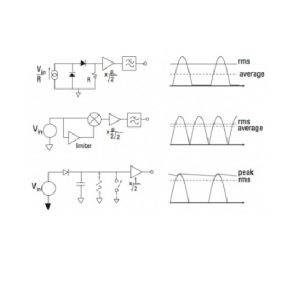

Hint 7. Selecting the Best Display Detection Mode

Hint 8. Measuring Burst Signals: Time Gated Spectrum Analysis
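
As a rough illustration of the time-domain versus frequency-domain distinction drawn in the introduction, the sketch below builds a two-tone signal as a list of time samples (what an oscilloscope would display) and computes its amplitude spectrum with a naive discrete Fourier transform (the kind of view a spectrum analyzer gives). It is not taken from the Keysight guide and does not model how a swept-tuned analyzer works internally. The sample rate, tone frequencies, and function names are all assumptions chosen so that each tone falls exactly on a frequency bin.

```python
import cmath
import math

# Assumed, illustrative values: not from the Keysight guide.
SAMPLE_RATE_HZ = 1000          # samples per second
NUM_SAMPLES = 200              # even count; bin spacing = 1000 / 200 = 5 Hz
TONES = [(50.0, 1.0), (120.0, 0.5)]   # (frequency in Hz, amplitude)


def make_signal():
    """Time-domain view: sum of sinusoids sampled at SAMPLE_RATE_HZ."""
    return [
        sum(a * math.sin(2 * math.pi * f * i / SAMPLE_RATE_HZ) for f, a in TONES)
        for i in range(NUM_SAMPLES)
    ]


def dft_magnitudes(samples):
    """Frequency-domain view: single-sided amplitude spectrum via a naive DFT.

    Assumes an even number of samples. This is O(n^2) and meant only for
    illustration; a real implementation would use an FFT.
    """
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        # DC and Nyquist bins appear once; all others are doubled to fold
        # in the matching negative-frequency bin.
        scale = 1.0 if k in (0, n // 2) else 2.0
        mags.append(scale * abs(acc) / n)
    return mags


samples = make_signal()
spectrum = dft_magnitudes(samples)
bin_hz = SAMPLE_RATE_HZ / NUM_SAMPLES

# Pick the two strongest bins and convert their indices to frequencies.
strongest = sorted(range(len(spectrum)), key=lambda k: spectrum[k], reverse=True)[:2]
peak_freqs = sorted(k * bin_hz for k in strongest)

for k in sorted(strongest):
    print(f"{k * bin_hz:6.1f} Hz  amplitude {spectrum[k]:.3f}")
```

In the sample list the two tones are superimposed and hard to tell apart, while in the spectrum they show up as two separate peaks at the chosen frequencies. Tones that do not land on a bin would spread into neighboring bins, which this sketch deliberately avoids.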